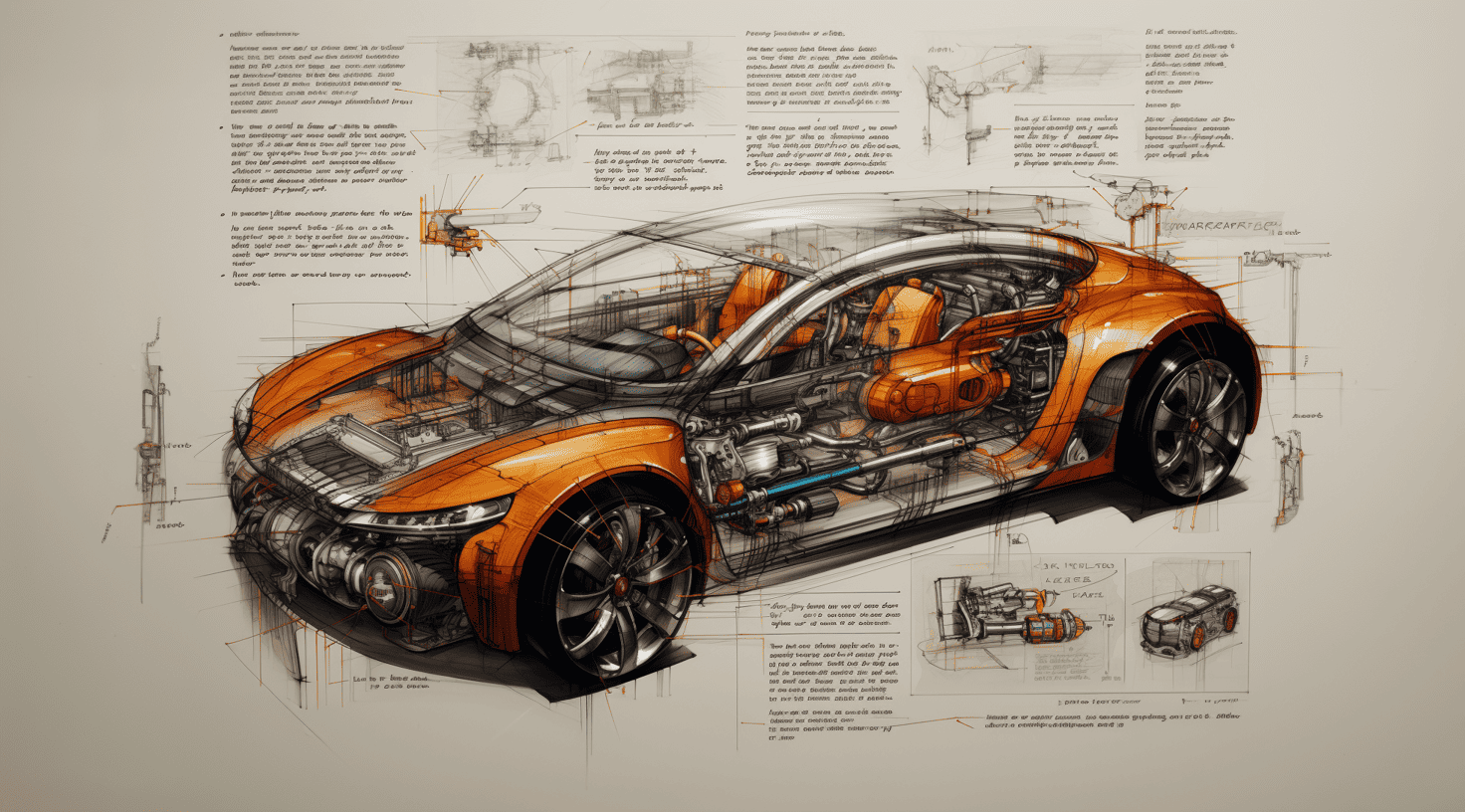

Eye2Drive’s Solutions for the Automotive Industry

In today’s landscape, imaging technology excels in attributes such as speed, color fidelity, and high dynamic range (HDR). However, it still adapts poorly to diverse lighting conditions, a limitation that is particularly problematic in automotive and AI-driven applications. Current solutions for the automotive market compensate by adding redundant technologies such as LIDAR and RADAR to improve safety, but these additions increase complexity and cost.

EYE2DRIVE is introducing a new approach to imaging technology. Our unique intellectual property enables us to create a dynamic imaging sensor that can adjust in real time to its environment. This technology mimics the adaptability and resilience of biological vision, ensuring that the captured data is always of high quality and contextually relevant. Our solution provides a cost-effective yet robust alternative for a wide range of applications.

Autonomous Navigation Issues We Solve

Autonomous vehicles rely heavily on vision systems to navigate safely and efficiently. However, these systems often encounter challenges that can hinder their effectiveness. Three of the most common issues are ghosting, flickering, and exposure problems. These issues can greatly impact the system’s ability to accurately interpret and respond to its surroundings. Let’s take a closer look at each of these challenges and why they are critical for the automotive market.

Ghosting

Ghosting in vision systems refers to the appearance of faint duplicate images offset from their original positions. This issue typically arises from motion between the sensor and the subject or from processing artifacts. Ghosting can interfere with high-precision image analysis, making it a concern in applications like autonomous driving. Conventional solutions often involve both hardware and software optimizations.

Flickering

Flickering in autonomous vehicle vision systems refers to rapid variations in image brightness, often caused by inconsistent lighting or sensor limitations. Flickering can impair the navigation system’s data interpretation and affect features such as lane detection and object recognition. Traditional solutions typically involve hardware and software enhancements to stabilize image capture. Dynamic sensors are immune to flickering.
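LED light sources are commonly dimmed with pulse-width modulation (PWM), switching on and off faster than the eye can see, so a camera using a short exposure can catch an LED on in some frames and off in others. The following minimal sketch illustrates that effect, assuming an idealized square-wave PWM LED; the 100 Hz frequency, 25% duty cycle, 30 fps frame rate, exposure times, and function names are all hypothetical, and the model says nothing about how Eye2Drive’s sensor works internally.

```python
def led_on_fraction(t_start, t_exp, f_pwm, duty):
    """Fraction of the exposure window [t_start, t_start + t_exp) during which
    an idealized PWM LED is lit (assumed on for the first `duty` of each period)."""
    period = 1.0 / f_pwm
    on_time = duty * period

    def cumulative_on(t):
        # Total LED on-time in [0, t). This is continuous in t, so float
        # rounding at period boundaries only causes tiny errors.
        n_periods, remainder = divmod(t, period)
        return n_periods * on_time + min(remainder, on_time)

    return (cumulative_on(t_start + t_exp) - cumulative_on(t_start)) / t_exp


def frame_brightness(n_frames, fps, t_exp, f_pwm=100.0, duty=0.25):
    """Relative LED brightness recorded in each frame (1.0 = fully lit)."""
    return [led_on_fraction(k / fps, t_exp, f_pwm, duty) for k in range(n_frames)]


if __name__ == "__main__":
    # Short 1 ms exposure, as a camera might use in bright daylight.
    print([round(b, 2) for b in frame_brightness(6, 30.0, 0.001)])
    # 10 ms exposure spanning one full PWM period.
    print([round(b, 2) for b in frame_brightness(6, 30.0, 0.010)])
```

With the 1 ms exposure, the LED registers at full brightness in every third frame and as dark in the others, which is the frame-to-frame flicker described above. With a 10 ms exposure covering a whole PWM period, every frame records the same 25% average, at the cost of a longer exposure that can saturate in bright scenes.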

Exposure

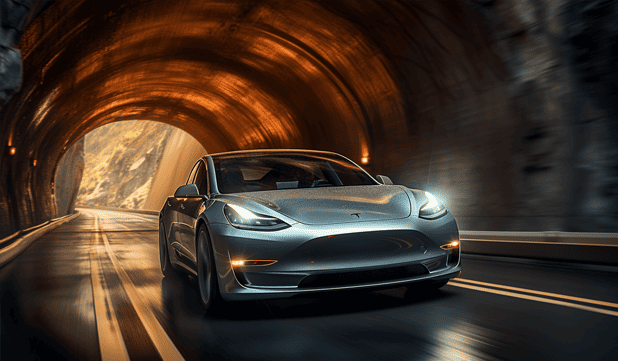

Exposure challenges in automotive vision systems stem from rapidly changing lighting conditions. Key issues include handling high-contrast scenarios like tunnels, coping with glare from sunlight or headlights, managing low-light conditions, and adapting to quick transitions between bright and shaded areas. These challenges can compromise image quality, affecting the system’s ability to make accurate decisions.

Challenges of the Automotive Industry Where Our Sensors Shine!

The ability to interpret the environment accurately is crucial in the rapidly evolving world of autonomous vehicles and the entire automotive market. However, traditional imaging systems often struggle in challenging scenarios like HDR imaging and LED signals, creating a demand for more advanced vision systems. Artificial Intelligence-powered adaptive imaging devices are emerging as a solution to these challenges. These devices can dynamically adjust their sensitivity and processing capabilities to ensure high-quality image acquisition even in the most demanding conditions.

HDR

When faced with high-contrast lighting, conventional imaging devices acquire multiple images at different exposures and combine them into a single HDR image. If the scene or the vehicle moves between exposures, this process can generate ghosting artifacts that make interpretation difficult. Adaptive imaging devices are intrinsically immune to this issue.
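To illustrate how ghosting arises, the following minimal sketch merges two simulated exposures of a one-dimensional row of pixels using a common triangular ("hat") weighting, which trusts mid-range pixel values more than near-black or near-saturated ones. The 8-bit full scale, exposure times, radiance values, and function names are hypothetical; this is a generic textbook-style merge, not the pipeline of any particular camera.

```python
FULL_SCALE = 255  # assumed 8-bit sensor output


def capture(radiance, exposure):
    """Simulate one exposure: pixel value = radiance * time, rounded and clipped."""
    return [min(FULL_SCALE, round(r * exposure)) for r in radiance]


def hat_weight(z):
    """Trust mid-range values most; near-black and near-saturated pixels least."""
    return min(z, FULL_SCALE - z)


def merge_hdr(frames, exposures):
    """Per pixel, weighted average of the radiance estimates z / t from each frame."""
    merged = []
    for values in zip(*frames):
        num = sum(hat_weight(z) * z / t for z, t in zip(values, exposures))
        den = sum(hat_weight(z) for z in values)
        # If every sample is unusable (all black or all saturated),
        # fall back to the first frame's estimate.
        merged.append(num / den if den else values[0] / exposures[0])
    return merged


def scene(object_pixels, width=8, background=10.0, obj=40.0):
    """A dim background with a brighter object occupying object_pixels."""
    return [obj if i in object_pixels else background for i in range(width)]


if __name__ == "__main__":
    exposures = [1.0, 4.0]  # short and long exposure times (arbitrary units)
    # Static scene: the object occupies pixels 2-3 in both captures.
    static = merge_hdr([capture(scene({2, 3}), t) for t in exposures], exposures)
    # Moving scene: the object shifts from pixels 2-3 to 5-6 between captures.
    moving = merge_hdr(
        [capture(scene({2, 3}), exposures[0]), capture(scene({5, 6}), exposures[1])],
        exposures,
    )
    print("static:", [round(v, 1) for v in static])
    print("moving:", [round(v, 1) for v in moving])
```

For the static scene the merge recovers the object’s true radiance of 40 on a background of 10. When the object moves between the two captures, the merged row instead shows two partial copies, at about 25 and 37 units: a ghost at the old position and a dimmed object at the new one.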

Sun

On a very sunny day, a traditional imaging device can struggle to capture the road ahead and the area surrounding an autonomous vehicle. Under these conditions, only adaptive imaging devices that leverage intelligent image acquisition can perform reliably and meet the more stringent requirements.

Fog

A foggy environment can make it hard for conventional vision devices and systems to detect other vehicles and adequately identify road signals and lanes.

Only new adaptive imaging devices can adjust their sensitivity to extract the highest-quality and most critical information from a scene.

Rain

Heavy rain can make it very difficult for the vision system of a driverless car to make the decisions that keep the vehicle and its passengers safe. The new dynamic imaging devices can handle most of these issues, supporting the navigation system even under challenging conditions.

Shadows

Traditional vision systems can find it challenging to process scenes with strong contrast between sunlit and shaded areas. Adaptive imaging devices can handle these situations without switching to HDR and the well-known ghosting issues it brings.

Tunnels

As with the human eye, entering or exiting a tunnel can temporarily blind a conventional vision device, negatively impacting the navigation system’s decision-making. Dynamic imaging sensors can adapt to drastic changes in lighting conditions in real time.
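Conventional cameras often correct exposure with a feedback loop that measures each frame and adjusts the next one, so a sudden change such as a tunnel entrance takes several frames to absorb. The following minimal sketch shows that lag, assuming a simple damped multiplicative controller with hypothetical target, gain, and luminance values; it is not a model of any particular camera’s auto-exposure algorithm, nor of how Eye2Drive’s dynamic sensor adapts.

```python
def simulate_auto_exposure(luminance, target=118.0, gain=0.5, full_scale=255.0):
    """Return the mean pixel level recorded in each frame by a simple
    feedback auto-exposure loop (hypothetical, for illustration only).

    luminance: per-frame scene luminance in arbitrary units.
    gain: damping factor in (0, 1]; each frame closes this fraction of the
          remaining error, measured in log terms, when the pixel is not clipped.
    """
    exposure = target / luminance[0]  # start converged on the first scene
    levels = []
    for lum in luminance:
        # Clipped sensor response: bright scenes saturate at full_scale.
        level = min(full_scale, lum * exposure)
        levels.append(level)
        # Correct the exposure for the next frame only; the floor
        # avoids division by zero in a completely black frame.
        exposure *= (target / max(level, 1e-3)) ** gain
    return levels


def frames_off_target(levels, target=118.0, tolerance=0.10):
    """Count frames whose mean level misses the target by more than tolerance."""
    return sum(abs(level - target) / target > tolerance for level in levels)


if __name__ == "__main__":
    # Hypothetical drive: 3 frames in daylight, then 10 frames inside a tunnel.
    scene = [1000.0] * 3 + [20.0] * 10
    levels = simulate_auto_exposure(scene)
    print([round(v, 1) for v in levels])
    print("frames badly exposed:", frames_off_target(levels))
```

With these assumed values, the first frame inside the tunnel records a mean level of about 2 out of 255, and six consecutive frames miss the target by more than 10% before the loop settles; at 30 fps that is roughly 0.2 s of poorly exposed images.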

Traffic

Congested areas with multiple vehicles, visual signals, and light reflections can pose additional challenges to traditional vision devices. Only new-generation imaging sensors can handle the scene’s complexity, feeding the navigation system with high-quality images.

Pedestrians

Autonomous vehicles must identify every pedestrian in the scene, even in the most challenging conditions, to ensure the safety of both the pedestrians and the car’s passengers. Conventional imaging systems can struggle to capture such scenes adequately.

Traffic Lights

Traffic lights, especially LED ones, can be challenging for traditional vision systems. Factors like their position, flickering light intensity, direct sunlight, and reflections can throw these systems off. Dynamic imaging sensor technology can manage these challenging conditions more effectively.

LED Headlights

As LED headlights become more common, traditional vision systems face new challenges. The variety of light types and shapes, flickering, and reflections can easily confuse conventional vision systems. New AI-driven imaging sensors can adapt to these conditions and capture high-quality images in real time.

LED Rear Lights

Just as headlights present unique challenges for conventional vision systems, LED rear lights introduce their own complexities for the sensors.

Only new dynamic vision technologies allow for the accurate detection and identification of red rear lights in all road conditions.

LED Signals

Autonomous vehicles must detect and identify all road signals, including LED signals, to stay within speed limits and be alerted to unpredictable road conditions. Adaptive and dynamic imaging sensors, driven by Artificial Intelligence, are the only ones up to the task.